You are viewing an older version of this section. View current production version.

Columnstore

MemSQL supports storing and processing data using two types of data stores: a completely in-memory rowstore and a disk-backed columnstore. The MemSQL rowstore and columnstores differ both in storage format (row, column) and in storage medium (RAM, disk - with flash or SSD recommended). MemSQL allows joining rowstore and columnstore data, thus allowing you to merge real-time and historical data together in a single query.

The rowstore is typically used for highly concurrent OLTP and mixed OLTP/analytical workloads. The columnstore is used primarily for analytical applications where the queries mainly involve aggregations over large datasets that do not fit in memory. In these cases the columnstore performs much better than the rowstore.

The decision framework for choosing a rowstore or a columnstore is included later in this section. In general, since rowstores support a larger variety of workloads, they are a good starting point.

This section provides a conceptual overview of MemSQL’s columnstore and includes considerations for optimizing your database performance using the columnstore.

Creating a Columnstore Table

The columnstore is enabled by adding a CLUSTERED COLUMNSTORE index to a table. If a clustered columnstore index is defined on a table, the table itself is physically stored using the columnstore. Currently, MemSQL supports one columnstore index per table. Combining columnstore indexes with other index types, except shard keys, is not currently supported.

Here is an example of a query that creates a columnstore table:

CREATE TABLE products (

ProductId INT,

Color VARCHAR(10),

Price INT,

dt DATETIME,

KEY (`Price`) USING CLUSTERED COLUMNSTORE ,

SHARD KEY (`dt`, `ProductId`)

);

As with any other MemSQL table, we define a SHARD KEY to explicitly control the data distribution. We define it on ProductId since sharding on a high cardinality identifier column generally allows for a more even distribution and prevents skew. It is also possible to randomly distribute data by either omitting the shard key, or defining an empty shard key SHARD KEY().

To create a columnstore table, you must include a CLUSTERED COLUMNSTORE key in the definition. Note that you can only have one CLUSTERED COLUMNSTORE key as well as the SHARD KEY. With the exception of metadata-only unenforced unique keys, you cannot add any other key, including PRIMARY KEY, to the columnstore table.

If a CLUSTERED COLUMNSTORE key is defined on a table, the rows of that table will be stored in a highly compressed columnar format on disk. We describe the details of the format below. While MemSQL can execute any query on a columnstore table that it can execute on a rowstore, some queries are more suitable for columnstore tables than others. Some queries that can benefit from using columnstore include:

- Queries that scan several columns out of a table with many columns. Columnstore table will only materialize columns that it actually needs to perform the query.

- Queries that scan a lot of data in

CLUSTERED COLUMNSTOREkey order. Because the data is highly compressed, and is stored in order, such a scan can very efficiently utilize the processor cache. - Joins between two columnstore tables on the

CLUSTERED COLUMNSTOREkey. Such a join can be executed as a merge join, resulting in a good performance and low memory overhead. - Queries with high selectivity filters on the

CLUSTERED COLUMNSTOREkey. By leveraging a technique calledsegment elimination, MemSQL can open only those row segments that actually contain relevant rows, significantly improving the performance of queries with high selectivity.

Columnstore tables are also not constrained by the amount of available memory, unlike rowstore tables.

Conceptual Differences Between Row and Column Stores

Row-oriented stores, or “rowstores” are the most common type of data stores used by relational databases. As the name suggests, a rowstore treats each row as a unit and stores all fields for a given row together in the same physical location. This makes rowstores great for transactional workloads, where the database frequently selects, inserts, updates, and deletes individual rows, often referencing either most or all columns.

Column-oriented stores, or “columnstores” treat each column as a unit and stores segments of data for each column together in the same physical location. This enables two important capabilities. One is to scan each column individually, in essence, being able to scan only the columns needed for the query, with good cache locality during the scan. The other capability is that columnstores lend themselves well to compression. For example, repeating and similar values can easily be compressed together. A simplified example is shown here:

Logical table and rowstore representation

| ProductId | Color | Price |

|---|---|---|

| 1 | Red | 10 |

| 2 | Red | 20 |

| 3 | Black | 20 |

| 4 | White | 20 |

Columnstore

| ProductId |

|---|

| 1 |

| 2 |

| 3 |

| 4 |

| Color |

|---|

| Red x 2 |

| Black |

| White |

“x 2” indicates that the value is repeated twice.

| Price |

|---|

| 10 |

| 20 x 3 |

“x 3” indicates that the value is repeated three times.

By physically organizing data by columns, the database is able to scan and aggregate data for individual columns very quickly, simply because less data needs to be scanned. This makes columnstores well-suited to analytical workloads. The trade-off of using the columnstore is that it does not lend itself well to manipulating individual rows quickly. Columnstores are usually batch-loaded for optimal performance, although small-batch and streaming insert to columnstores can also perform well. The combination of efficient batch loading and processing of analytic queries make columnstore highly suitable for data warehousing scenarios.

Choosing a Columnstore or Rowstore

The following table enumerates the strengths and intended uses of each storage engine.

| In-Memory Rowstore | Flash, SSD, or Disk-based Columnstore |

|---|---|

| Operational/transactional workloads | Analytical workloads |

| Fast inserts and updates over a small or large number of rows | Fast inserts over a small or large number of rows |

| Random seek performance | Fast aggregations and table scans |

| Updates/deletes are frequent | Updates/deletes are rare |

| Compression |

How the MemSQL Columnstore Works

In MemSQL there are a few concepts that are important to understand in order to make the best possible use of the columnstore:

- Clustered columnstore index - A clustered columnstore index represents the full table structure and is its primary storage.

- Columnstore key column(s) - When creating a columnstore index, one or more columns need to be defined as the key column(s) for the columnstore index. The data in the columnstore is stored in key column order. Selecting a good columnstore key can significantly improve performance as covered later in this section.

- Row segment - A row segment represents a logical set of rows in the columnstore index. MemSQL stores in memory the metadata for each row segment, which includes the total row count for the segment and a bitmask tracking which rows have been deleted.

- Column segment - Each row segment contains a column segment for every column in a table. The column segment is the unit of storage for a columnstore table and contains all values for a specific column within the row segment. Values in column segments are always stored in the same logical order across column segments within the same row segment. MemSQL stores in memory the metadata for each column segment, which includes the minimum and maximum values contained within the segment. This metadata is used at query execution time to determine whether a segment can possibly match a filter, a process known as

segment elimination. - Sorted row segment group - A sorted row segment group represents a set of row segments that are sorted together on the columnstore key column(s). This means that within a sorted row segment group there will be no row segments with overlapping value ranges for the column(s) that make up the key for the columnstore index. New segment groups are formed when more segments are created after running

INSERT,LOAD, orUPDATEqueries on the table. Information on how this affects query performance and how to minimize the number of sorted row segment groups is covered later in this section.

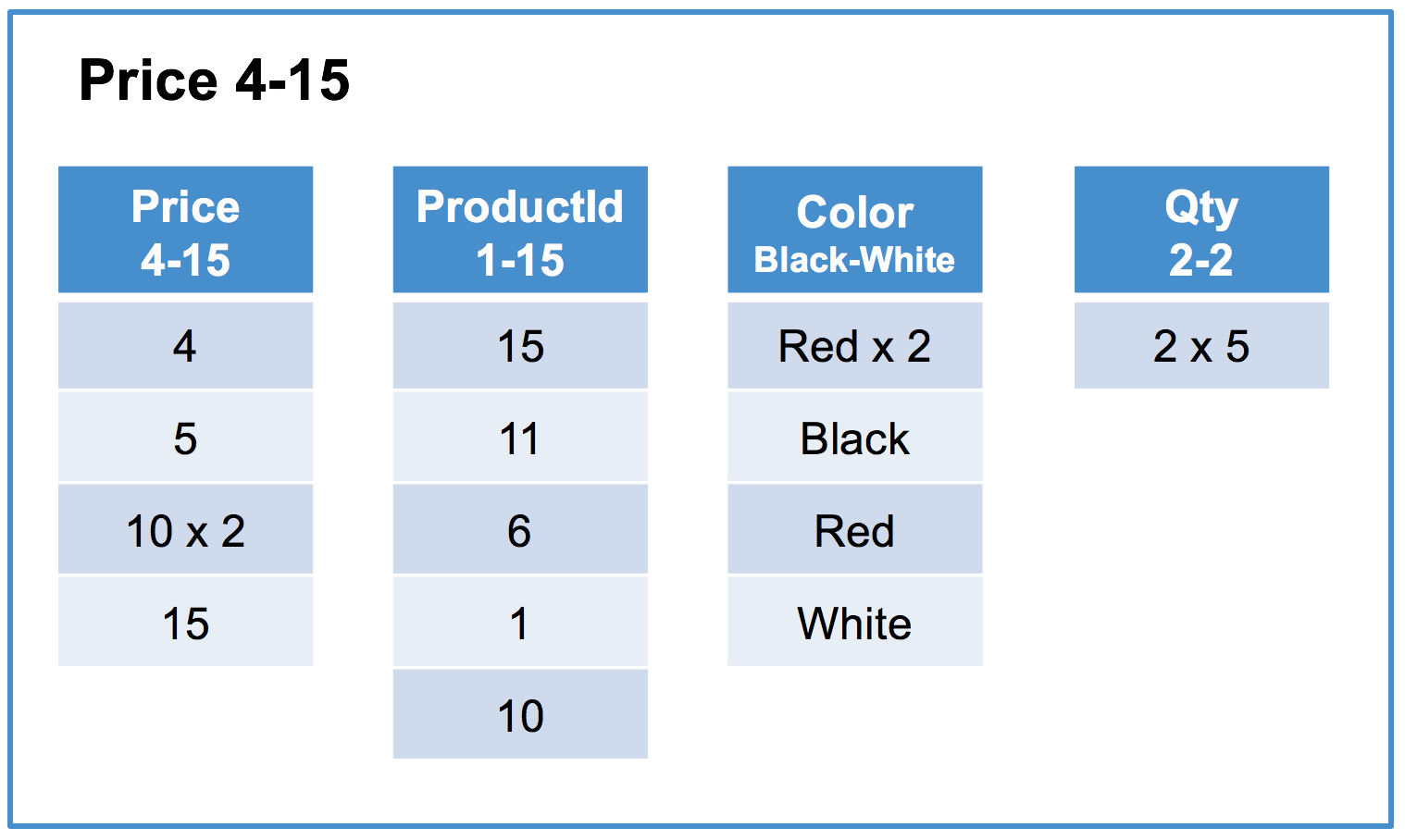

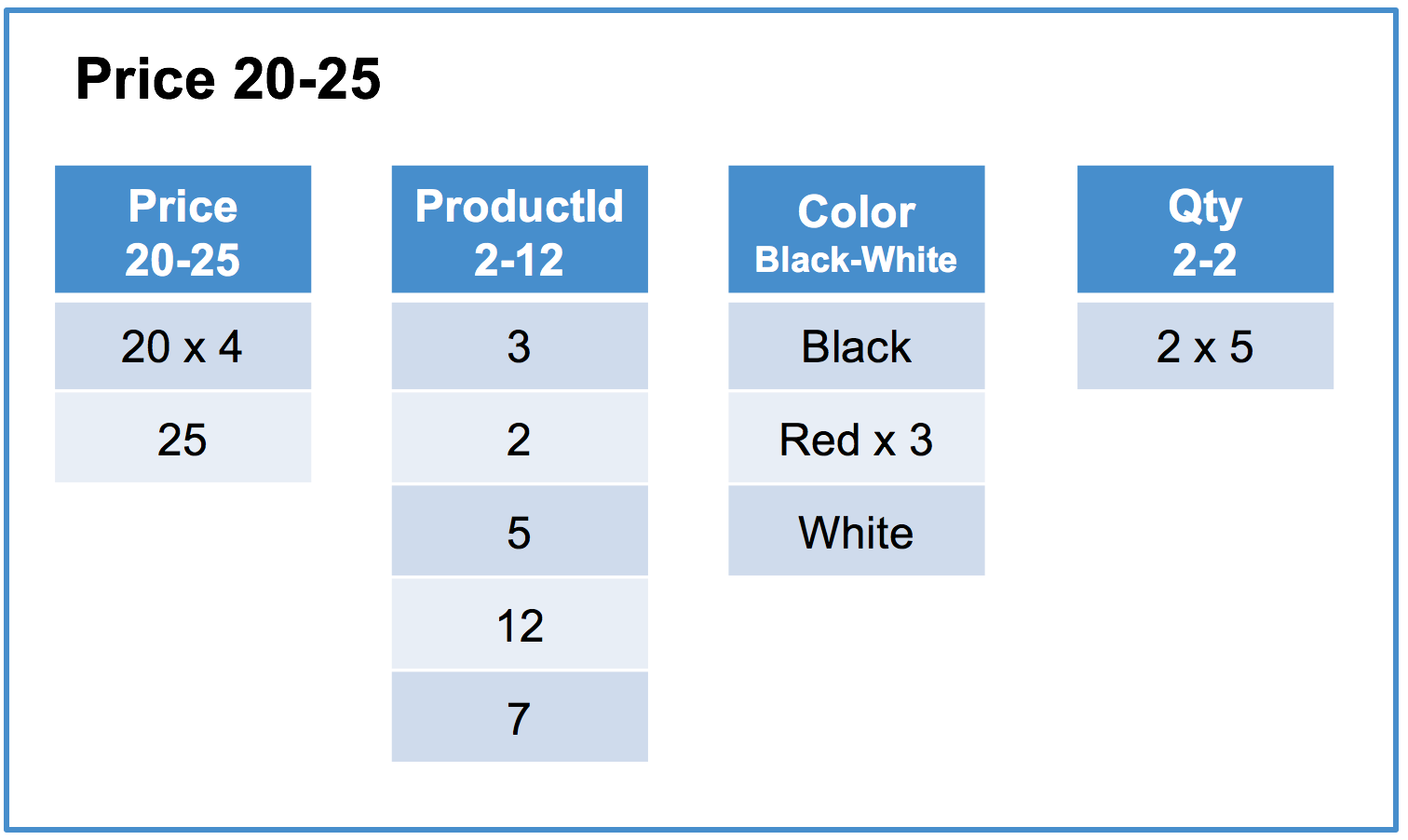

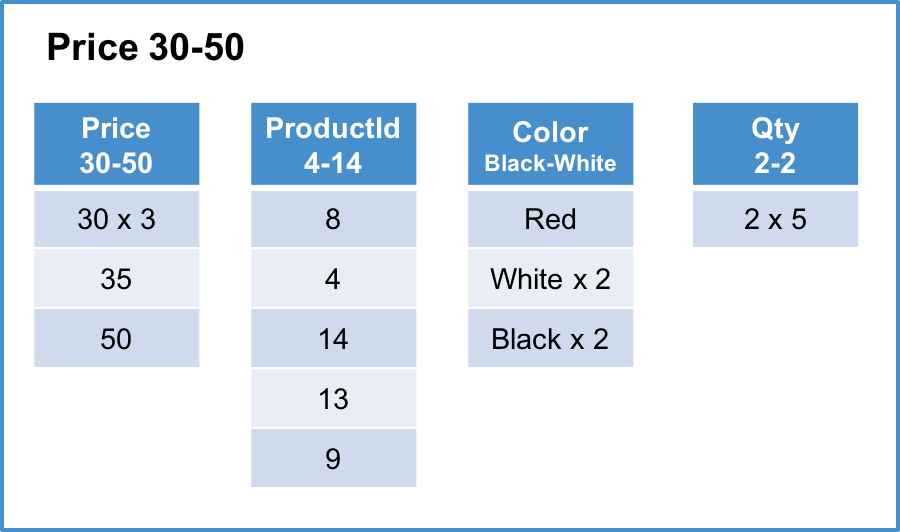

Below is an example of a MemSQL columnstore index on the Products table using the Price column as its key.

Column segments typically contain on the order of hundreds of thousands of rows. In this example, the segment size is 5 rows for readability.

Logical table and rowstore representation

| ProductId | Color | Price | Qty |

|---|---|---|---|

| 1 | Red | 10 | 2 |

| 2 | Red | 20 | 2 |

| 3 | Black | 20 | 2 |

| 4 | White | 30 | 2 |

| 5 | Red | 20 | 2 |

| 6 | Black | 10 | 2 |

| 7 | White | 25 | 2 |

| 8 | Red | 30 | 2 |

| 9 | Black | 50 | 2 |

| 10 | White | 15 | 2 |

| 11 | Red | 5 | 2 |

| 12 | Red | 20 | 2 |

| 13 | Black | 35 | 2 |

| 14 | White | 30 | 2 |

| 15 | Red | 4 | 2 |

Columnstore - Each row segment contains one column segment per column in the table. For simplicity, this example contains the initial load of the table and has a single sorted row segment group.

Sorted row segment group #1 of 1

Row segment #1 of 3

Row segment #2 of 3

Row segment #3 of 3

“x N” indicates that the value is repeated N times.

Creating Efficient Columnstore Queries

Queries against tables with a columnstore index in MemSQL can take advantage of five characteristics of a columnstore index:

- All queries will be able to take advantage of the fact that only the column segments containing columns referenced in the query will need to be scanned, as well as the fact that the columnstore’s compression causes less data to need to be scanned. Taking the above table as an example. The query

SELECT SUM(Qty) FROM Products;will only need to scan the threeQtycolumn segments, each of which only contain a single value due to the compression. - Some queries can be performed simply by reading the in-memory metadata for column segments referenced in the query. As an example, the query

SELECT COUNT(*) FROM Products;will only need to read the row count and delete bitmask for all row segments to produce its result, completely eliminating the need to read column segments from disk. Queries that use theMINorMAXaggregates can eliminate the need to read column segments from disk if no delete has been performed against the column segment. - Some queries can reduce the number of segments that need to be read from disk by eliminating these segments based on the segment metadata (min and max values for the segment). The efficiency of this method depends on what percentage of segments can actually be eliminated.

- For queries that filter on the key columns of the columnstore index, segment elimination is typically very efficient as segments within each row segment will not cover overlapping value ranges. For example, in the above table the query

SELECT AVG(Price), AVG(Qty) FROM Products WHERE Price BETWEEN 1 AND 10;will eliminate all segments exceptrow segment: #1 column segment: Price 4-15androw segment: #1 column segment: Qty 2-2which will be scanned. - For queries that filter on the non-key columns that don’t correlate with the key columns, segment elimination can be much less valuable as segments within each row segment can cover overlapping value ranges. For example, in the above table, the query

SELECT AVG(Price) FROM Products WHERE Color = 'Red';will be forced to scan all segments for thePriceandColorcolumns as no segment can be eliminated when the valueRedis contained in all segments of theColorcolumn.

- For queries that filter on the key columns of the columnstore index, segment elimination is typically very efficient as segments within each row segment will not cover overlapping value ranges. For example, in the above table the query

- Queries that join tables on columns that are the index columns of a columnstore index can be performed very efficiently through the use of a merge join algorithm allowing the join to be performed by simply scanning two segments that need to be joined in lock-step.

- Certain types of data allow filters and group-by operations to be performed without decompressing data from its serialized-for-disk format. This greatly improves performance by reducing the amount of data that need to be processed, especially when the cardinalities of the involved columns are low. This optimization is only performed in cases when execution run time would be improved. See Understanding Operations on Encoded Data for more information.

Writing Columnstore Data

Unlike other columnstore implementations, MemSQL supports very fast, small-batch writes (such as single row inserts) directly into columnstore tables. This is implemented by storing newly written rows in a row-oriented skiplist before flushing them to the column-oriented format. This is all handled automatically behind the scenes, and rows are visible to reads as soon as they are committed to the row-oriented skiplist.

When using MemSQL columnstore indexes, the following are changes that can occur against the data store.

- Insert - Inserts into a columnstore index will either go into the rowstore-backed or create a new columnstore-backed row segment. This is automatically determined by the engine based on heuristics involving the size of the insert and the current state of the columnstore index. If an insert is large enough that it would write directly to the columnstore, it is an optimized insert. An optimized insert will load a set of data and pre-sort it in the order of the columnstore key column(s); this will cause the load to always create a single new row segment. By default,

INSERTorLOAD DATAqueries writing more than16 MBper partition will produce an optimized insert. - Delete - Deleting a row in a columnstore index causes the row to be marked as deleted in the segment metadata, leaving the data in place within the row segment. Segments which only contain deleted rows are removed, and the optimization process covered below will compact segments that require optimization.

- Update - An update in a columnstore index is internally performed as a delete followed by an insert within a transaction. As with inserts, small enough updates will write to the rowstore-backed row segment.

- Optimization - MemSQL has optimization routines that run automatically and can also be started manually. These routines attempt to automatically merge row segments together in order to improve query efficiency. DML statements can be performed while columnstore optimizations take place. For more information, see the OPTIMIZE TABLE topic.

Managing Columnstore Segments

A columnstore table will have the best performance if the rows in the table are in global sorted order across all the row segments. In reality, maintaining such an order is not feasible in the presence of continuous writes.

MemSQL uses an advanced algorithm that allows it to maintain the order as close to sorted as possible, while data is being ingested or updated. Such a process is called a background merger and is constantly running in the background if the order of the row segments can be improved.

Background merger is optimistic, in that if at any point it tries to move around data which is also being changed by a concurrent UPDATE or DELETE query, it will discard all the work it has done so far and start over. It works on a small chunk of data at a time, so

it is always a relatively small amount of work that is being discarded. However, in the presence of a very heavy update workload it can be a significant slowdown compared to a pessimistic merger, which locks the row segments it is currently processing. A user can manually trigger a pessimistic merger by running an OPTIMIZE TABLE command. We will explain below how to decide whether such a command is necessary, and how to run it.

MemSQL uses a concept of a sorted row segment group to describe a set of row segments that are sorted together. Row segments form a sorted row segment group if and only if there is an order on the row segments such that for each row segment the smallest row in it is no smaller than the largest row in any row segment before it. Here and below when we say that one row is smaller than another row, we mean that the values of columns of the CLUSTERED COLUMNSTORE key of that row are smaller than those of the other row.

If the data had a perfect global order, it would consist of a single sorted row segment group. If the data is in a completely random order, it is likely to comprise as many sorted row segment groups as there are row segments. The goal of the background merger is to reorganize the rows among row segments in such a way that the number of sorted row segment groups is as small as possible.

To inspect the current state of the sorted row segment groups of a particular table, run the SHOW COLUMNAR MERGE STATUS FOR <table_name> command:

SHOW COLUMNAR MERGE STATUS FOR groups;

****

+---------------------------------------------------------------------------+

| Merger | State | Plan | Progress | Partition |

+---------------------------------------------------------------------------+

| (Current groups) | NULL | 741,16,1 | NULL | 0 |

| (Current groups) | NULL | 782,20 | NULL | 1 |

| (Current groups) | NULL | 701,40,5 | NULL | 2 |

| (Current groups) | NULL | 326,207,123,37,21,19,17 | NULL | 3 |

+---------------------------------------------------------------------------+

Let’s look closely at the first row of the result. According to it, the slice of the table that is stored on partition 0 has three sorted row segment groups, one consists of 741 row segments, one consists of 16 row segments, and one consists of a single row segment - a total of 758 row segments. Consider the impact of such a split into sorted row segment groups on a very simple query like

SELECT * FROM groups WHERE user_group = 15;

By the definition of the sorted row segment group, the very first sorted row segment group will have at most one row segment that contains rows with user_group equal to 15, unless user_group = 15 is on the boundary of two row segments, or if there is a large data skew and several row segments consist only of rows with user_group = 15. Similarly, at most one row segment in the second sorted row segment group contains relevant rows, and the only segment of the third sorted row segment group might also contain relevant rows. This way, only three row segments out of the total of 758 will be opened and materialized. While the query in this example is very simple, similar reasoning works for significantly more complex queries.

Now take a look at the sorted row segment groups on partition 3. Clearly, it is significantly less optimized than the remaining three, and a select query like the one shown above will result in materializing 8 row segments. If the background merger is enabled, and no workload is running concurrently, within several seconds this partition would get optimized. However, in the presence of a heavy workload, the optimistic background merger might fall behind. In this case, it might be reasonable to manually trigger a pessimistic merger by calling:

OPTIMIZE TABLE groups

If we run SHOW COLUMNAR MERGE STATUS while OPTIMIZE TABLE is being executed, we might see the manual merger in action:

SHOW COLUMNAR MERGE STATUS FOR groups;

+------------------------------------------------------------------------------+

| Merger | State | Plan | Progress | Partition |

+------------------------------------------------------------------------------+

| (Current groups) | NULL | 741,16,1 | NULL | 0 |

| (Current groups) | NULL | 782,20 | NULL | 1 |

| (Current groups) | NULL | 701,40,5 | NULL | 2 |

| (Current groups) | NULL | 326,207,123,37,21,19,17 | NULL | 3 |

| Manual Merger | Working | 326+207+123+37+21+19+17 | 53.12% | 3 |

+------------------------------------------------------------------------------+

What this new row indicates is that there is a manual merger running on the partition 3, and that at this time it has done 53.12% of the work.

When the merger is done, the table now is in a better shape:

SHOW COLUMNAR MERGE STATUS FOR groups;

****

+------------------------------------------------------------+

| Merger | State | Plan | Progress | Partition |

+------------------------------------------------------------+

| (Current groups) | NULL | 741,16,1 | NULL | 0 |

| (Current groups) | NULL | 782,20 | NULL | 1 |

| (Current groups) | NULL | 701,40,5 | NULL | 2 |

| (Current groups) | NULL | 730,20 | NULL | 3 |

+------------------------------------------------------------+

Note that at no point were any of the partitions merged into a single sorted row segment group in this example. The reason for that is that both optimistic and pessimistic merger use an advanced algorithm that is optimized to do small amortized chunks of work in the presence of concurrent writes and maintain data in a few sorted row segment group, rather than to attempt to merge all the data into a single sorted row segment group. In cases when it is acceptable to sacrifice some time on data ingestion to achieve even higher SELECT performance, it is possible to run a manual command that merges data on each partition into a single sorted row segment group:

OPTIMIZE TABLE groups FULL;

SHOW COLUMNAR MERGE STATUS FOR groups;

****

+----------------------------------------------------------+

| Merger | State | Plan | Progress | Partition |

+----------------------------------------------------------+

| (Current groups) | NULL | 758 | NULL | 0 |

| (Current groups) | NULL | 802 | NULL | 1 |

| (Current groups) | NULL | 746 | NULL | 2 |

| (Current groups) | NULL | 750 | NULL | 3 |

+----------------------------------------------------------+

At this time any highly selective select will materialize only one row segment per partition.

Unlike OPTIMIZE TABLE <name>, which takes amortized time proportional to the size of recently loaded data, OPTIMIZE TABLE <name> FULL always takes time in the order of magnitude of the size of the entire table, unless data in that table is already sorted.

When inserting a small amount of rows into the columnstore table, an in-memory rowstore-backed segment is used to store the rows. As this rowstore-backed segment fills, the background flusher periodically will flush these rows to disk. A rowstore-backed segment can be flushed to disk manually by running OPTIMIZE TABLE <table_name> FLUSH.

OPTIMIZE TABLE t FLUSH;

Advanced Columnstore Configuration Options

Configuring segment size in columnstore tables

In MemSQL, data in a columnstore table are organized into multiple row segments. For certain workloads, the size of row segments significantly affects performance. Considerations include:

- In general, larger segments compress better than smaller ones. This leads to less disk usage and faster scan across the table.

- On the other hand, smaller segments could benefit more from segment elimination. As a result, queries with highly selective filters on the columnstore index run faster with smaller segments.

In MemSQL, the default size of the row segments is controlled by the global variable columnstore_segment_rows. By default, columnstore_segment_rows has a value of 1024000, meaning that each segment contains 1024000 rows by default.

-

The variable

columnar_segment_rowsfrom previous MemSQL versions was deprecated in favor ofcolumnstore_segment_rows.columnar_segment_rowsexists as an alias tocolumnstore_segment_rows. -

A cluster upgraded from a version before 6.0 will have the previous default value of

102400forcolumnstore_segment_rows. See Upgrading MemSQL to 6.0 for more information.

In addition to the global variable, it is possible to overwrite the global setting and set the segment size for a specific columnstore table. This can be done during the table creation, or by altering an existing table. For example:

CREATE TABLE t (id INT, KEY (id) USING CLUSTERED COLUMNSTORE WITH (columnstore_segment_rows=100000));

SHOW CREATE TABLE t;

****

+-------+---------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Table | Create Table |

+-------+---------------------------------------------------------------------------------------------------------------------------------------------------------------+

| t | CREATE TABLE `t` (

`id` int(11) DEFAULT NULL,

KEY `id` (`id`) /*!90619 USING CLUSTERED COLUMNSTORE */ /*!90621 WITH(COLUMNSTORE_SEGMENT_ROWS=100000) */

) |

+-------+---------------------------------------------------------------------------------------------------------------------------------------------------------------+

Now, modify the value of columnstore_segment_rows for this columnstore. The MODIFY KEY keyName clause identifies

the index whose settings are to be changed (in this case, the columnstore index).

ALTER TABLE t MODIFY KEY id SET (columnstore_segment_rows=20000);

SHOW CREATE TABLE t;

****

+-------+--------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Table | Create Table |

+-------+--------------------------------------------------------------------------------------------------------------------------------------------------------------+

| t | CREATE TABLE `t` (

`id` int(11) DEFAULT NULL,

KEY `id` (`id`) /*!90619 USING CLUSTERED COLUMNSTORE */ /*!90621 WITH(COLUMNSTORE_SEGMENT_ROWS=20000) */

) |

+-------+--------------------------------------------------------------------------------------------------------------------------------------------------------------+

Configuring the rowstore-backed segment size in columnstore tables

In addition to the on-disk segments, each MemSQL columnstore also has an in-memory rowstore-backed segment. Small-batch inserts write to the rowstore-backed segment first, while the background flusher process periodically compresses those recently inserted rows and creates on-disk segments.

The size of the rowstore-backed segment is controlled by the global variable columnstore_flush_bytes. The background flusher process starts to create on-disk segments when the amount of data in the rowstore-backed segment exceeds columnstore_flush_bytes, with a default of 32 MB. Additionally, insert and load operations are considered small-batch if they write to each partition less than columnstore_flush_bytes * columnstore_disk_insert_threshold, which is by default 16 MB at a time.

The columnstore_disk_insert_threshold value is a fractional value with a default of 0.5. It controls the minimum size of a disk-backed row segment created by insert and load operations. Note that if OPTIMIZE TABLE FLUSH is manually run, the minimum segment size can be much smaller.

Considerations about tuning the rowstore-backed segment size include:

- The rowstore-backed segment is stored in-memory. Therefore, the table consumes less memory when the rowstore-backed segment is smaller.

- The

background flusherprocess can write more rows to disk at once if the rowstore-backed segment is larger, reducing the number of disk write operations during data ingestion.

Similar to columnstore_segment_rows, the columnstore_flush_bytes value can also be configured per-table with the following syntax:

CREATE TABLE t2 (id INT, KEY (id) USING CLUSTERED COLUMNSTORE WITH (columnstore_flush_bytes=4194304, columnstore_segment_rows=100000));

ALTER TABLE t2 MODIFY KEY id SET (columnstore_flush_bytes=8388608);

Prefetching

When a query matches multiple segments which are not currently cached in memory, it can benefit from prefetching. Prefetching improves performance on these types of queries by requesting the file from disk for the next segment while processing the rows of the current segment. Prefetching is enabled by default for all MemSQL columnstore tables.

System Recommendations for Optimal Columnstore Performance

See the Columnstore Recommendations section of Installation Best Practices.

Query Processing Operations on Encoded Data

MemSQL performs some query processing operations directly on encoded data in columnstore tables. Columnstore data is stored encoded in a number of different forms, including dictionary encoding, run-length encoding, and value encoding. For these encodings, certain operations, including filtering and aggregation, can be run very efficiently, through the use of special processing techniques, as well as Single-Instruction, Multiple-Data (SIMD) instructions on processors that support the Intel AVX2 instruction set.

For more information and examples of these operations, see Understanding Operations on Encoded Data.